The thing about the internet is, if you give people an open platform, the scum will follow.

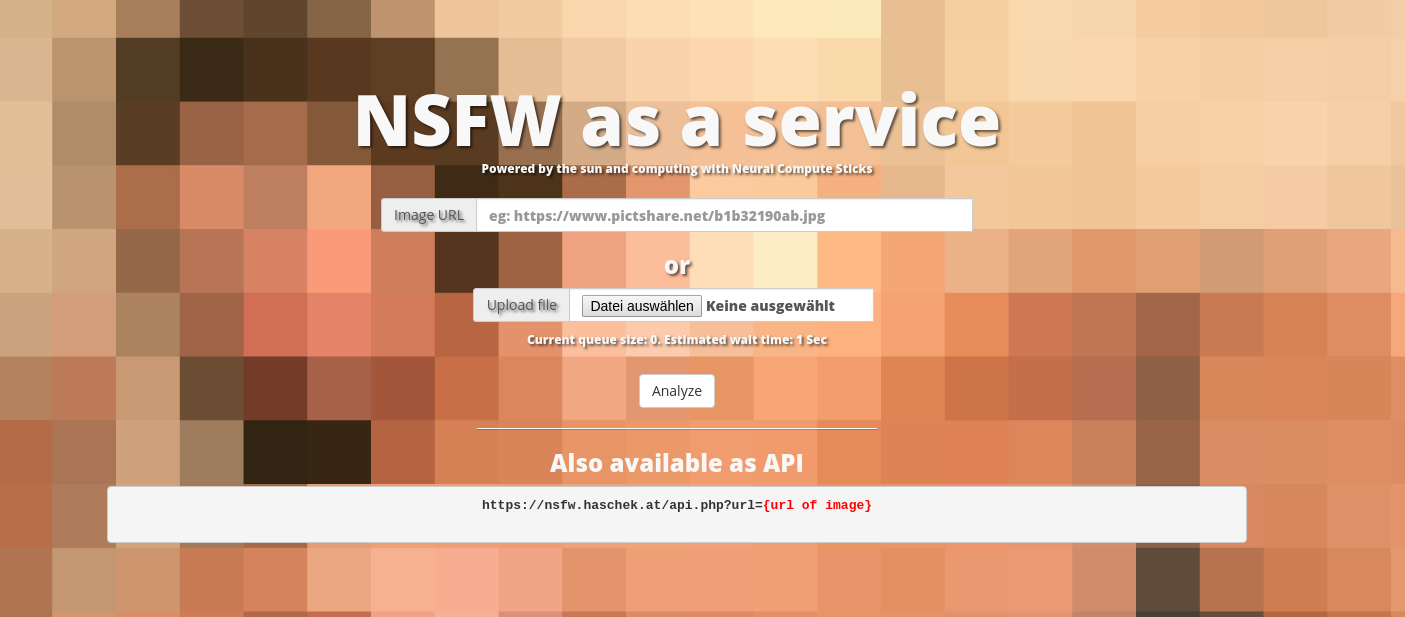

- Analyze images for pornography yourself: https://nsfw.haschek.at

- Give us an upvote on Product Hunt: https://www.producthunt.com/posts/naas

Backstory

When I first started working on my open source image hosting service PictShare I didn't think anyone but myself would use it.

I use it all the time, most of all on this blog to host the images I embed. Over the years usage has increased, and with increased usage of a site where anyone can upload images anonymously, there will be those who upload illegal things.

Not long ago it was brought to my attention that someone uploaded and linked an image that could be child pornography via PictShare.

First contact with Interpol

After confirming that the image hosted on my site was indeed a pornographic image that included a child, I called the police and asked them what to do. The policeman on the phone had no idea what an image hoster is and said I should download and print the image so I could bring it to my local police station. Erm.. not what I planned to do. He obviously had no experience with this kind of content, so after a short Google search I learned that Interpol (International Criminal Police Organization) is equipped with the tools for this kind of thing.

I wrote to them and looked up the IP address the image had been uploaded from. They said I should just delete the image and report back if that IP uploaded anything else.

Was this the only case?

When I read the answer from Interpol I had a thought that hadn't occurred to me before: What if this image wasn't the only one?

There are thousands of images on PictShare. I couldn't look through them all even in a year, so I had to think of something else.

Deep learning to the rescue

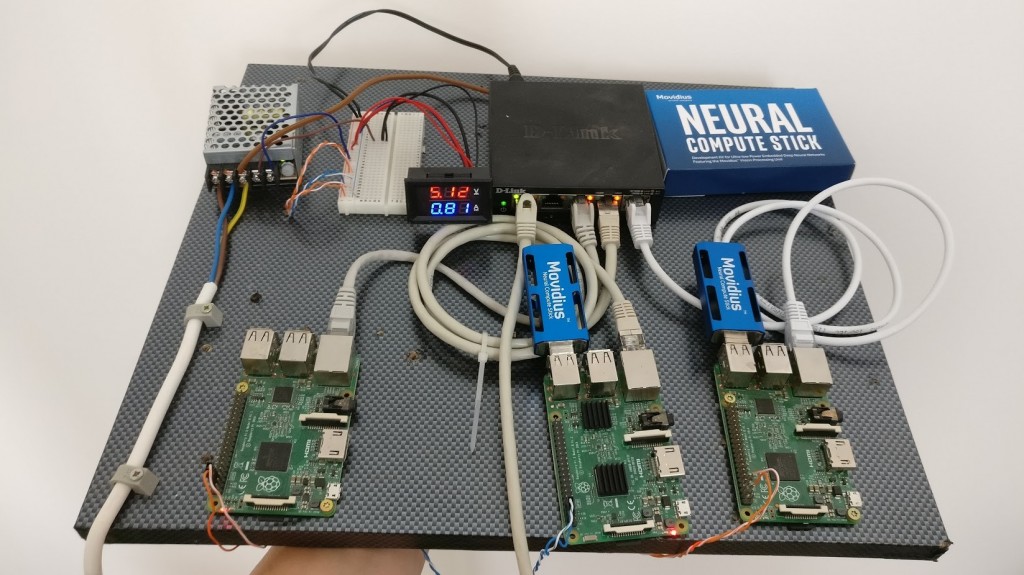

Intel recently released their Movidius neural compute stick. This is a little USB stick that can execute trained neural networks to classify images. The best thing is that it can be run in combination with a Raspberry Pi and won't use nearly as much power as a GPU would.

Running a trained neural network is computationally intensive and usually calls for expensive hardware such as a GPU or fast server CPUs. The Intel NCS allows low-power platforms like the Raspberry Pi to use the trained model without drawing much power.

Finding pornography with deep learning

After compiling the NCS SDK on the Raspberry Pi (which took about 10 hours on a Raspberry Pi 3) and compiling Yahoo's pretrained open_nsfw model for the NCS platform, I wrote a script that could successfully classify images which contained nudity or pornographic content.

Making it easy to use

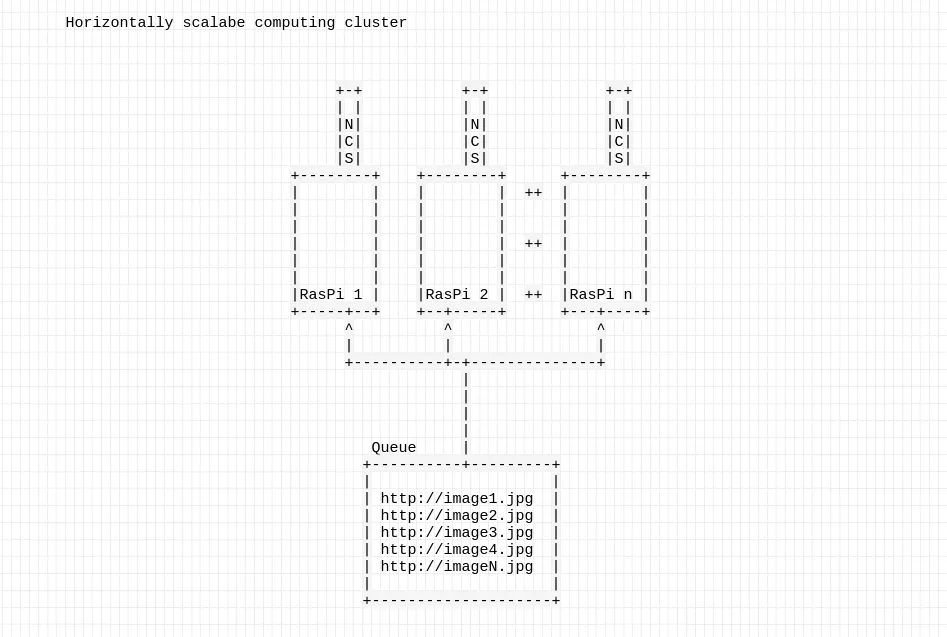

I designed this simple system where I can add as many RasPis as I want to scale the computation. There is a web interface available to anyone via https://nsfw.haschek.at/ where files can be uploaded and classified.

All Raspberry Pis check the queue every second and if they have no workload, they download the next image and let the trained model classify it. When they're finished, they save the result back in the database and delete the image.
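The polling loop described above can be sketched roughly like this. It is only an illustration, not the code running on the Pis: the in-memory `deque` stands in for the real queue and database, and `process_next`, `run_worker`, `classify` and `max_idle_polls` are hypothetical names made up for this example.

```python
import time
from collections import deque
from typing import Callable, Deque, Dict, Optional


def process_next(queue: Deque[str], results: Dict[str, float],
                 classify: Callable[[str], float]) -> Optional[str]:
    """Take one image ID off the queue, classify it, and store the score.

    Returns the processed image ID, or None if the queue was empty.
    """
    if not queue:
        return None
    image_id = queue.popleft()               # stands in for "download the next image"
    results[image_id] = classify(image_id)   # stands in for "save the result back"
    # A real worker would delete its local copy of the image at this point.
    return image_id


def run_worker(queue: Deque[str], results: Dict[str, float],
               classify: Callable[[str], float],
               poll_interval: float = 1.0, max_idle_polls: int = 3) -> None:
    """Poll the queue; stop after it was empty max_idle_polls times in a row.

    The stop condition is an assumption so the sketch terminates;
    a real worker would presumably keep polling forever.
    """
    idle = 0
    while idle < max_idle_polls:
        if process_next(queue, results, classify) is None:
            idle += 1
            time.sleep(poll_interval)  # wait before checking the queue again
        else:
            idle = 0
```

With several workers sharing one database-backed queue, taking the next image would also have to be atomic so that two Pis don't classify the same file.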

Reporting more child pornography

I wrote a small script that filled the computation queue with all images on PictShare and set it to report any image the neural net rated as more than 30% likely to contain pornography.
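The reporting step boils down to a threshold filter over the stored scores. Here is a minimal sketch, assuming the results are available as a mapping from image ID to a score between 0 and 1; `flag_for_review` and `REPORT_THRESHOLD` are hypothetical names, not part of PictShare.

```python
from typing import Dict, List

REPORT_THRESHOLD = 0.30  # the 30% cut-off mentioned in the text


def flag_for_review(scores: Dict[str, float],
                    threshold: float = REPORT_THRESHOLD) -> List[str]:
    """Return image IDs scoring strictly above the threshold, highest score first."""
    flagged = [(image_id, score) for image_id, score in scores.items()
               if score > threshold]
    flagged.sort(key=lambda pair: pair[1], reverse=True)
    return [image_id for image_id, _ in flagged]


# Example with made-up scores:
example_scores = {"a.jpg": 0.10, "b.jpg": 0.95, "c.jpg": 0.31, "d.jpg": 0.30}
print(flag_for_review(example_scores))
```

A low cut-off like 30% trades more false positives for fewer misses, which makes sense when every flagged image gets looked at by a person afterwards anyway.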

Sadly I found 16 more images which contained child pornography. I reported all uploaders and images to Interpol and they were very happy with my help.

Expanding the project

After my successful first trials I contacted another (very) big image hosting service and offered them my help. They were very excited because they could proactively find this kind of content now instead of having to wait for reports.

Since their image database is much larger than mine was, the project ran for about a week but in the end we found what we were looking for.

We found exactly 3292 images that contained child pornography. They asked me not to mention the name of their platform for obvious reasons, but they have reported our findings to their local authorities.

In the near future I'll reach out to more image hosters and organizations to help them fight illegal content on the internet.

If you think this technology could help your company too, write me an email: christian@haschek.at

Links

- Webservice: https://nsfw.haschek.at

- This project on Product Hunt: https://www.producthunt.com/posts/naas
